Agent-Based Modeling

The term agent is used today to mean anything from a mere subroutine to a conscious entity. There are "helper" agents for web retrieval and computer maintenance, robotic agents that venture into inhospitable environments, agents in an economy, etc. Intuitively, for an object to be referred to as an agent it must possess some degree of autonomy, that is, it must be in some sense distinguishable from its environment by some kind of spatial, temporal, or functional boundary. It must possess some kind of identity to be identifiable in its environment. To make the definition of agent useful, we often further require that agents have some autonomy of action, that is, that they can engage in tasks in an environment independently, without external control. This is, in effect, a definition of agency directly related to the one put forward in the 13th century by Thomas Aquinas: an entity capable of election, or choice.

This is a very important definition indeed; for an entity to be referred to as an agent, it must be able to step out of the dynamics of an environment and make a decision about what action to take next, a decision that may even go against the natural course of its environment. By this, simplistically, we mean that an agent (say, on a steep incline) can opt to go uphill rather than roll with the force of gravity. Since choice is a term loaded with many connotations from theology, philosophy, cognitive science, and so forth, we prefer to discuss instead the ability of some agents to step out of the dynamics of their interaction with an environment and explore different behavior alternatives. In physics we refer to such a process as dynamical incoherence. In computer science, Von Neumann, based on the work of Turing on universal computing devices, referred to these systems as memory-based systems, that is, systems capable of engaging with their environments beyond concurrent state-determined interaction by using memory to store descriptions and representations of their environments. Such agents are dynamically incoherent in the sense that their next state or action is not solely dependent on the previous state, but also on some (random-access) stable memory that keeps the same value until it is accessed and does not change with the dynamics of the environment-agent interaction. In contrast, state-determined systems are dynamically coherent (or coupled) to their environments because they function by reaction to present input and state using some iterative mapping in a state space.
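The contrast between state-determined and memory-based systems can be illustrated with a minimal Python sketch, using the incline example from the text. All names here (`state_determined_step`, `MemoryAgent`, the `"goal"` entry, `run_demo`) are hypothetical and chosen only for illustration; the sketch is a toy under these assumptions, not a model from the project.

```python
from dataclasses import dataclass, field


def state_determined_step(position: int, slope: int) -> int:
    """Dynamically coherent: the next state is a fixed map of state and input."""
    return position + slope  # always moves with the incline


@dataclass
class MemoryAgent:
    """Dynamically incoherent: the next action also depends on a stable memory."""
    position: int = 0
    # Hypothetical stored description; the environment dynamics never change it.
    memory: dict = field(default_factory=dict)

    def step(self, slope: int) -> int:
        goal = self.memory.get("goal")
        if goal is None:
            move = slope  # nothing stored: react like the state-determined system
        elif goal > self.position:
            move = 1      # may go against the slope
        elif goal < self.position:
            move = -1
        else:
            move = 0      # goal reached: hold position
        self.position += move
        return self.position


def run_demo(steps: int = 5, slope: int = -1):
    """Run both systems on the same downhill slope and return final positions."""
    rolling = 0
    agent = MemoryAgent(memory={"goal": 3})
    for _ in range(steps):
        rolling = state_determined_step(rolling, slope)
        agent.step(slope)
    return rolling, agent.position
```

Calling `run_demo()` lets the state-determined system roll downhill while the memory-based agent climbs toward its stored goal and stays there. The only difference between the two is the memory entry that is read by the agent but never rewritten by the slope.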

Fitness of a population of agents

Single run of the agent-based model of genotype editing (ABMGE) on the dynamic Schwefel function (dynamic severity 50, 1000 generations) when the fitness function changes every 100 generations (shown in the movie as a yellow background).

Let us then refer to the view of agency as a dynamically incoherent system-environment engagement or coupling as the strong sense of agency, and to the view of agency as some degree of identity and autonomy in dynamically coherent system-environment coupling as the weak sense of agency. The strong sense of agency is more precise because of its explicit requirement for memory and the ability to effectively explore and select alternatives. Indeed, the weak sense of agency is much more subjective because the definitions of autonomy, boundary, or identity (in a loop) are largely arbitrary in dynamically coherent couplings.

We have been working on various types of agent models that are either based on the strong sense of agency detailed above, or attempt to study the emergence of such agency from dynamically coherent environments. The strong sense of agency has been further detailed in an overview of agent models for a previous project on the modeling of socio-technical systems, as well as in an overview of research on complex systems modeling. Examples of agent-based models we have worked on include: the simulations of evolving agents with different kinds of reproduction strategies using Fuzzy Development Programs, the agent-based model of genotype editing (summarized below), the evolving cellular automata and emergent computation experiments, the soft computing agents for recommendation systems (especially TalkMine), and the immune-inspired spam detection algorithm.

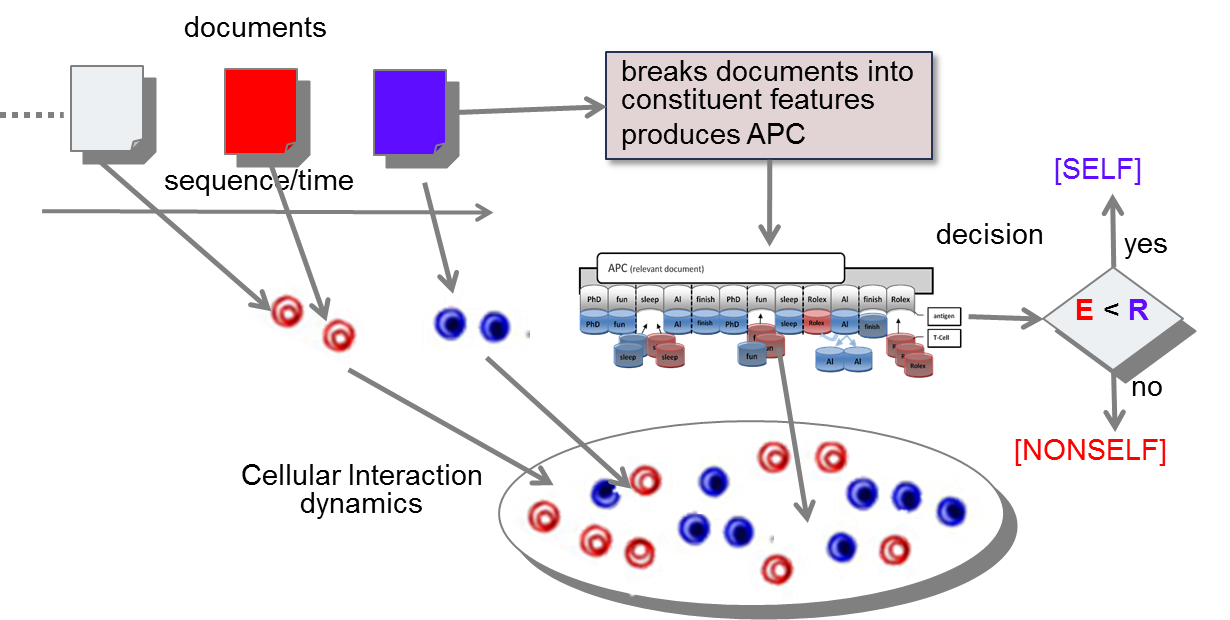

Artificial Immune Systems for Classification

We have developed a bio-inspired solution for binary classification of textual documents inspired by T-cell cross-regulation in the vertebrate adaptive immune system, which is a complex adaptive system of millions of cells interacting to distinguish between self and nonself substances [Abi-Haidar and Rocha, 2011]. In analogy, automatic document classification assumes that the interaction and co-occurrence of thousands of words in text can be used to identify conceptually related classes of documents: at a minimum, two classes with relevant and irrelevant documents for a given concept (e.g. articles with protein-protein interaction information). Our agent-based method for document classification expands the analytical model of Carneiro et al. by allowing us to deal simultaneously with many distinct populations of antigen-specific T-cells and their collective dynamics. We have extended this model to produce a spam-detection system. We have also developed our agent-based model further to apply it to biomedical article classification, testing it on a dataset of biomedical articles provided by the BioCreative 2.5 challenge (see references below). Our results are useful for biomedical text mining, but they also help us understand T-cell cross-regulation as a potential general principle of classification available to collectives of molecules without a central controller. While there is still much to know about the specifics of T-cell cross-regulation in adaptive immunity, Artificial Life allows us to explore alternative emergent classification principles while producing useful bio-inspired tools. Recently, we started expanding this algorithm to other forms of classification, such as sensor data from human-robot interactions [Francisco, Wood, Sabanovic and Rocha, 2014].
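To make the idea of per-word cell populations concrete, here is a deliberately simplified Python sketch. It is not the cross-regulation dynamics of Carneiro et al. nor the agent-based model from the cited papers: it only keeps one effector and one regulatory tally per word and compares their totals. The class name `CrossRegulationToy`, the `boost` and `decay` parameters, the update rule, and the choice to treat relevant documents as the effector-dominated class are all assumptions made for illustration.

```python
from collections import defaultdict


class CrossRegulationToy:
    """Toy per-word effector (E) and regulatory (R) tallies; all rules are assumptions."""

    def __init__(self, boost: float = 1.0, decay: float = 0.1):
        self.effector = defaultdict(float)    # E population per word
        self.regulatory = defaultdict(float)  # R population per word
        self.boost = boost
        self.decay = decay

    def present(self, words, relevant=None):
        """Present one document; return True if it is classed as relevant.

        If a label is given, the populations of the presented words are
        updated after the verdict is computed (a stand-in for training).
        """
        words = set(words)
        e_total = sum(self.effector[w] for w in words)
        r_total = sum(self.regulatory[w] for w in words)
        verdict = e_total > r_total  # effector-dominated means relevant

        if relevant is not None:
            grow = self.effector if relevant else self.regulatory
            shrink = self.regulatory if relevant else self.effector
            for w in words:
                grow[w] += self.boost
                shrink[w] = max(0.0, shrink[w] - self.decay)
        return verdict


clf = CrossRegulationToy()
clf.present(["protein", "interaction", "binding"], relevant=True)
clf.present(["weather", "football"], relevant=False)
print(clf.present(["protein", "binding", "assay"]))
print(clf.present(["football", "weather", "score"]))
```

The point of the sketch is the bookkeeping: there is no central classifier, only many word-specific populations whose collective balance decides the class. A faithful model would replace the update rule with the cell-conjugation dynamics described in the references.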

Models of RNA Editing

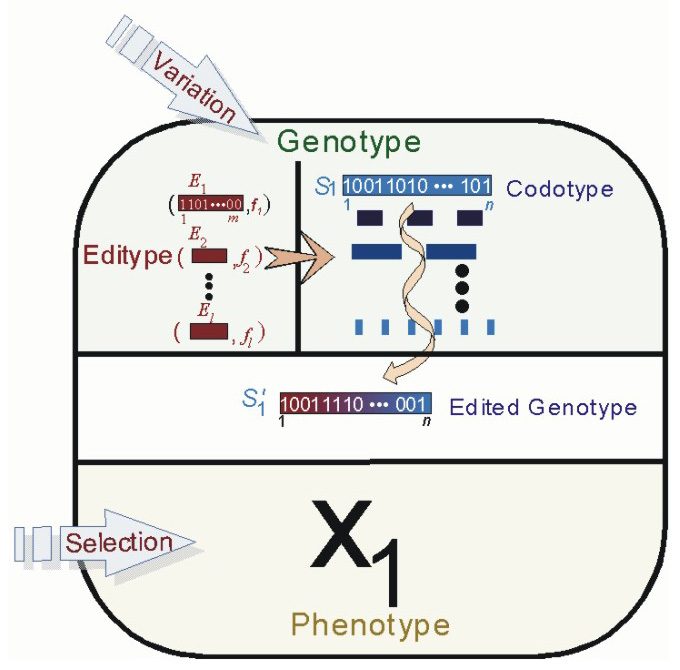

Evolutionary models in theoretical biology at large, and computational biology and artificial life in particular, rarely deal with ontogenetic, non-inherited alteration of genetic information because they are based on a direct genotype-phenotype mapping. In contrast, in Nature several processes have been discovered which alter genetic information encoded in DNA before it is translated into amino-acid chains. Ontogenetically altered genetic information is not inherited but is extensively used in regulation and development of phenotypes, giving organisms the ability to, in a sense, re-program their genotypes according to environmental cues. An example of post-transcriptional alteration of gene-encoding sequences is the process of RNA Editing. Our agent-based model of genotype editing presents a novel architecture for evolving agents in which coding and non-coding genetic components are allowed to coevolve [Huang, Kaur, Maguitman, Rocha, 2007, and other references below]. Our goal is twofold: (1) to study the role of RNA Editing regulation in the evolutionary process, and (2) to investigate the conditions under which genotype edition improves the optimization performance of evolutionary algorithms. We have shown that genotype edition allows evolving agents to perform better in several classes of fitness functions, both in static and dynamic environments. We are also investigating the ways in which the indirect genotype/phenotype mapping resulting from genotype editing leads to a better exploration/exploitation compromise in the search process. In the past year we developed an entirely new modeling platform in Python to run experiments to explore the evolutionary advantages of RNA editing.
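The indirect genotype/phenotype mapping described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the project's modeling platform: the genotype is a bit string (the coding part) plus a list of hypothetical `(pattern, replacement)` editing rules (the non-coding part), fitness is a simple match count against a target string, and the function names are invented for this example. Equal-length substitution rules are an assumption made for brevity.

```python
import random


def apply_editome(codome: str, editome: list[tuple[str, str]]) -> str:
    """Return the edited (expressed) bit string; the codome itself is not changed."""
    edited = codome
    for pattern, replacement in editome:
        # Each rule rewrites every non-overlapping occurrence of its pattern.
        edited = edited.replace(pattern, replacement)
    return edited


def expressed_fitness(codome: str, editome: list[tuple[str, str]], target: str) -> int:
    """Selection sees the edited string: count positions that match the target."""
    phenotype = apply_editome(codome, editome)
    return sum(a == b for a, b in zip(phenotype, target))


def mutate(bits: str, rate: float, rng: random.Random) -> str:
    """Flip each bit independently with probability `rate`."""
    return "".join(
        ("1" if b == "0" else "0") if rng.random() < rate else b for b in bits
    )


def reproduce(codome: str, editome: list[tuple[str, str]], rate: float,
              rng: random.Random):
    """Offspring inherit the unedited codome and the editome, both subject to mutation."""
    child_editome = [(mutate(p, rate, rng), r) for p, r in editome]
    return mutate(codome, rate, rng), child_editome


codome = "00110011"
editome = [("0011", "1111")]
target = "11111111"
print(expressed_fitness(codome, [], target))       # fitness without editing
print(expressed_fitness(codome, editome, target))  # fitness with editing
```

Two properties of the text are visible here: editing changes what is evaluated but not what is inherited, and the coding and non-coding parts mutate separately, so they can coevolve. When the target changes, a different editing rule can re-express the same codome without the codome itself having to change.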

Some characteristics of our model of RNA Editing:

- Genome contains both coding and non-coding portions: Codome and Editome (Editosome)

- Agents with editome perform better in changing environments

- Study of regulation via non-coding DNA

- Observe emergence of regulation with promoter signals

- Memory of previous environments

- Bio-inspired algorithm for optimization

- Outperforms traditional evolutionary algorithms on many classes of functions

Agents with separate codotype and editype components of their genotype in our Evolutionary Model of Genotype Editing. From Huang, Kaur, Maguitman, Rocha, 2007.

This research is described in greater detail in the separate Evolutionary Models of Genotype Editing page.

Funding

Project partially funded by:

- Indiana University Collaborative Research Grants 2013. Project title: “Social SLAM: Creating Dynamical Socio-Environmental Models for Mobile Robots”.

- IARPA Contract: Early Model-Based Event Recognition with Surrogates (EMBERS), 2012-2014.

Project Members (Current and Former)

Luis Rocha (PI)

Al Abi-Haidar

Chien-Feng Huang

Jasleen Kaur

Ana Maguitman

Manuel Marques Pita

Selma Sabanovic

Ian B Wood

Selected Project Publications

- A. Challa, D. Hao, J.C. Rozum, and L.M. Rocha [2024]. "The Effect of Noise on the Density Classification Task for Various Cellular Automata Rules". ALIFE 2024: Proceedings of the 2024 Artificial Life Conference. MIT Press. pp. 83. DOI: 10.1162/isal_a_00823.

- M.R. Francisco, I. Wood, S. Sabanovic and L.M. Rocha [2014]. "Designing a minimalist socially aware robotic agent for the home". Artificial Life 14: Proceedings of the Fourteenth International Conference on the Synthesis and Simulation of Living Systems: 876-883, MIT Press

- A. Abi-Haidar [2011]. "An adaptive document classifier inspired by T-Cell cross-regulation in the immune system". PhD Dissertation, Indiana University.

- A. Abi-Haidar and L.M. Rocha [2011]. "Collective Classification of Textual Documents by Guided Self-Organization in T-Cell Cross-Regulation Dynamics". Evolutionary Intelligence. 4(2):69-80. DOI: 10.1007/s12065-011-0052-5.

- A. Abi-Haidar and L.M. Rocha [2010]. "Collective Classification of Biomedical Articles using T-Cell Cross-regulation". In: Artificial Life XII: Twelfth International Conference on the Simulation and Synthesis of Living Systems. H. Fellermann et al. (Eds.). MIT Press, pp. 706-713.

- A. Abi-Haidar and L.M. Rocha [2010]. "Biomedical Article Classification Using an Agent-Based Model of T-Cell Cross-Regulation". In: Artificial Immune Systems: 9th International Conference, (ICARIS 2010). E. Hart, C. McEwan, J. Timmis, and A. Hone (Eds.) Lecture Notes in Computer Science. Springer-Verlag, 6209: 237-249. Recipient of the Best Paper Award for ICARIS 2010.

- A. Abi-Haidar and L.M. Rocha [2008]. "Adaptive Spam Detection Inspired by a Cross-Regulation Model of Immune Dynamics: A Study of Concept Drift". In: Artificial Immune Systems: 7th International Conference, (ICARIS 2008). Bentley, Peter; Lee, Doheon; Jung, Sungwon (Eds.) Lecture Notes in Computer Science. Springer-Verlag, 5132: 36-47.

- A. Abi-Haidar and L.M. Rocha [2008]. "Adaptive Spam Detection Inspired by the Immune System". In: Artificial Life XI: Eleventh International Conference on the Simulation and Synthesis of Living Systems. S. Bullock, J. Noble, R. A. Watson, and M. A. Bedau (Eds.). MIT Press, pp. 1-8.

- L.M. Rocha and J. Kaur [2007]. "Genotype Editing and the Evolution of Regulation and Memory". Proceedings of the 9th European Conference on Artificial Life. Lecture Notes in Artificial Intelligence (LNAI), 4648: 63-73 (Springer-Verlag).

- C. Huang, J. Kaur, A. Maguitman, L.M. Rocha [2007]. "Agent-Based Model of Genotype Editing". Evolutionary Computation, 15(3): 253-89.

- Rocha, L.M., A. Maguitman, C. Huang, J. Kaur, and S. Narayanan [2006]. "An Evolutionary Model of Genotype Editing". In: Artificial Life 10: Tenth International Conference on the Simulation and Synthesis of Living Systems. L.M. Rocha, L. Yaeger, M. Bedau, D. Floreano, R. Goldstone, and A. Vespignani (Eds.). MIT Press, In Press.

- Rocha, Luis M. and W. Hordijk [2005]. "Material Representations: From the Genetic Code to the Evolution of Cellular Automata". Artificial Life. 11 (1-2), pp. 189-214.

- Rocha, Luis M. [2001]."Evolution with Material Symbol Systems." Biosystems. Vol. 60, pp. 95-121.

- Joslyn, Cliff and Luis M. Rocha [2000]. "Towards Semiotic Agent-Based Models of Socio-Technical Organizations". Proc. AI, Simulation and Planning in High Autonomy Systems (AIS 2000) Conference, Tucson, Arizona, USA. ed. HS Sarjoughian et al., pp. 70-79

- Rocha, Luis M. [2000]. "Syntactic autonomy, cellular automata, and RNA editing: or why self-organization needs symbols to evolve and how it might evolve them". In: Closure: Emergent Organizations and Their Dynamics. Chandler J.L.R. and G. Van de Vijver (Eds.) Annals of the New York Academy of Sciences. Vol. 901, pp. 207-223.

- Rocha, Luis M. [1999]. "Complex Systems Modeling: Using Metaphors From Nature in Simulation and Scientific Models". In: BITS: Computer and Communications News. Computing, Information, and Communications Division. Los Alamos National Laboratory. November 1999.

- Rocha, Luis M. [1999]. "From Artificial Life to Semiotic Agent Models: Review and Research Directions". Los Alamos National Laboratory Technical Report: LA-UR-99-5475.

- Rocha, Luis M. and Cliff Joslyn [1998]. "Simulations of Evolving Embodied Semiosis: Emergent Semantics in Artificial Environments". Simulation Series; Vol. 30, (2), pp. 233-238.

- Rocha, Luis M. [1998]. "Syntactic Autonomy". In: Proceedings of the Joint Conference on the Science and Technology of Intelligent Systems (ISIC/CIRA/ISAS 98). National Institute of Standards and Technology, Gaithersburg, MD. IEEE Press, pp. 706-711.